Over the last decade, enterprise applications that were once deployed on-premises have steadily moved to the cloud. On-premises infrastructure was costly and hard to maintain, while cloud computing offered low-cost hosting, so the move made sense for most enterprises. The same decade saw the rise of hyperscale cloud providers such as AWS, Azure, Google Cloud, Alibaba Cloud and Oracle Cloud.

However, with the advent of 5G and the ultra-low latency demanded by applications such as self-driving cars and remote surgery, it has become critical to process data close to its source so that actions can be taken in real time, rather than sending it to a distant cloud and paying a high round-trip time. For these reasons, the industry is witnessing a shift toward Edge Computing, which is estimated to overtake cloud computing in the next 3-5 years. Alongside low latency, data residency requirements, under which enterprises must keep data within their local region or country, are pushing cloud providers to deploy mini data centers at edge locations.
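To make the round-trip argument concrete, here is a minimal sketch of best-case propagation delay over fiber. It assumes light travels at roughly 200,000 km/s in fiber (about two thirds of its speed in vacuum) and ignores queuing, routing and processing time. The distances are hypothetical and chosen only for illustration.

```python
# Rough propagation-delay estimate; real round trips are longer because of
# queuing, routing hops and server processing time.
SPEED_IN_FIBER_KM_PER_S = 200_000  # about two thirds of the speed of light in vacuum


def round_trip_ms(distance_km: float) -> float:
    """Best-case round-trip propagation time over fiber, in milliseconds."""
    return 2 * distance_km / SPEED_IN_FIBER_KM_PER_S * 1000


# Hypothetical distances between a device and the site processing its data.
for label, km in [("edge site", 20), ("regional cloud", 1500), ("distant cloud", 8000)]:
    print(f"{label:>15}: {round_trip_ms(km):.1f} ms")
```

Even before any processing happens, distance alone puts a floor under the response time, which is why latency-sensitive workloads favor nearby compute.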

Edge computing comes in many form factors, such as regional data centers, micro-modular data centers, streetside cabinets and Telco Edge Clouds (TEC). Each of them solves a use case based on application design, latency, jitter and bandwidth requirements. While we can develop and deploy Edge Clouds with our own software stack, it is critical to participate and provide thought leadership in global forums like the GSMA TEC project, which produced the Operator Platform Telco Edge proposal. Following these standards will ensure that we are in line with Edge Computing infrastructure deployments and can interoperate with other Edge Computing providers in the future to accommodate applications such as drones roaming across a city from one operator to another.

Edge Computing involves critical infrastructure deployed at multiple locations and cities, close to business centers. This distributed infrastructure requires an efficient Edge orchestrator that takes the client's use case requirements into account, performs the appropriate scheduling checks and deploys the applications accordingly. Network and enterprise applications will be delivered as containers or as virtual machines on different kinds of hypervisors, and life cycle management of these applications is critical to service assurance. The orchestrator has to manage network, compute and storage requirements globally. Hence, we need an Edge Orchestrator built on open source technologies to handle Edge Computing deployments at scale.

In this blog, we explore the definition of Edge Computing, compare it with cloud computing, and review the market forecast, regional deployment overview, vertical priorities, critical infrastructure, software stack and orchestration requirements.

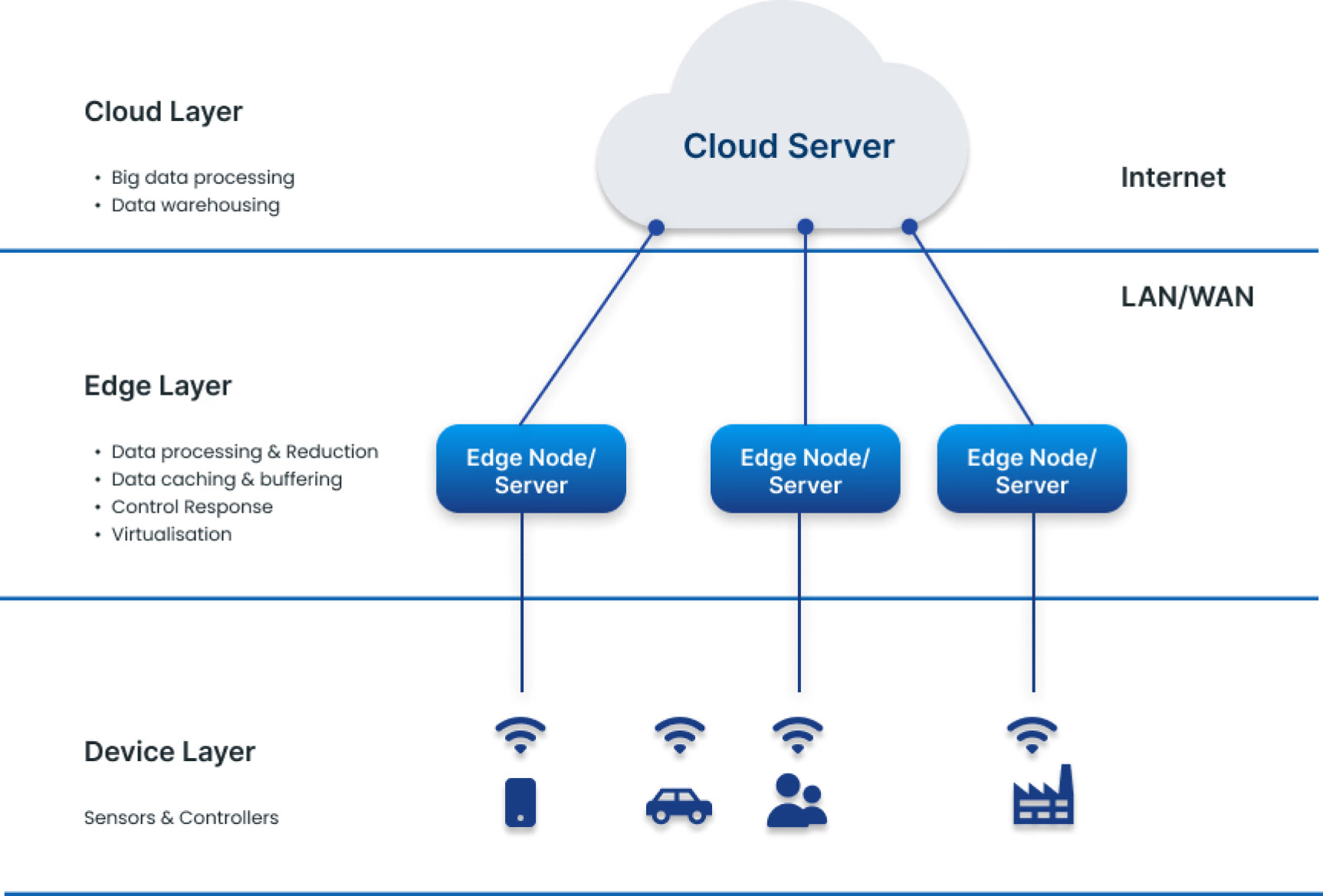

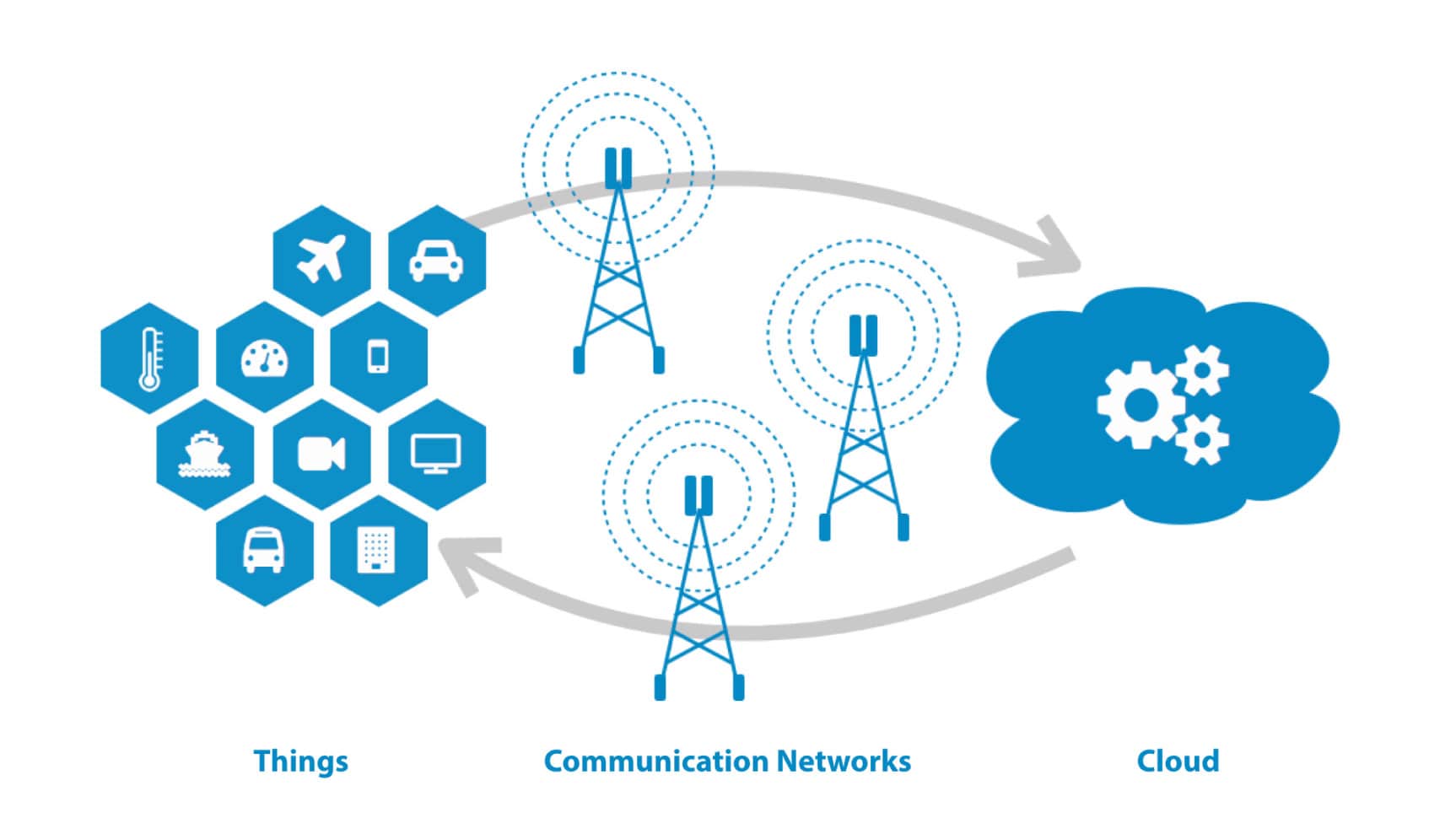

Edge computing is the practice of moving compute power physically closer to where data is generated, usually an Internet of Things device or sensor. Named for the way compute power is brought to the edge of the network or device, edge computing allows for faster data processing, increased bandwidth and ensured data sovereignty. By processing data at a network’s edge, edge computing reduces the need for large amounts of data to travel among servers, the cloud and devices or edge locations to get processed. This is particularly important for modern applications such as data science and AI.

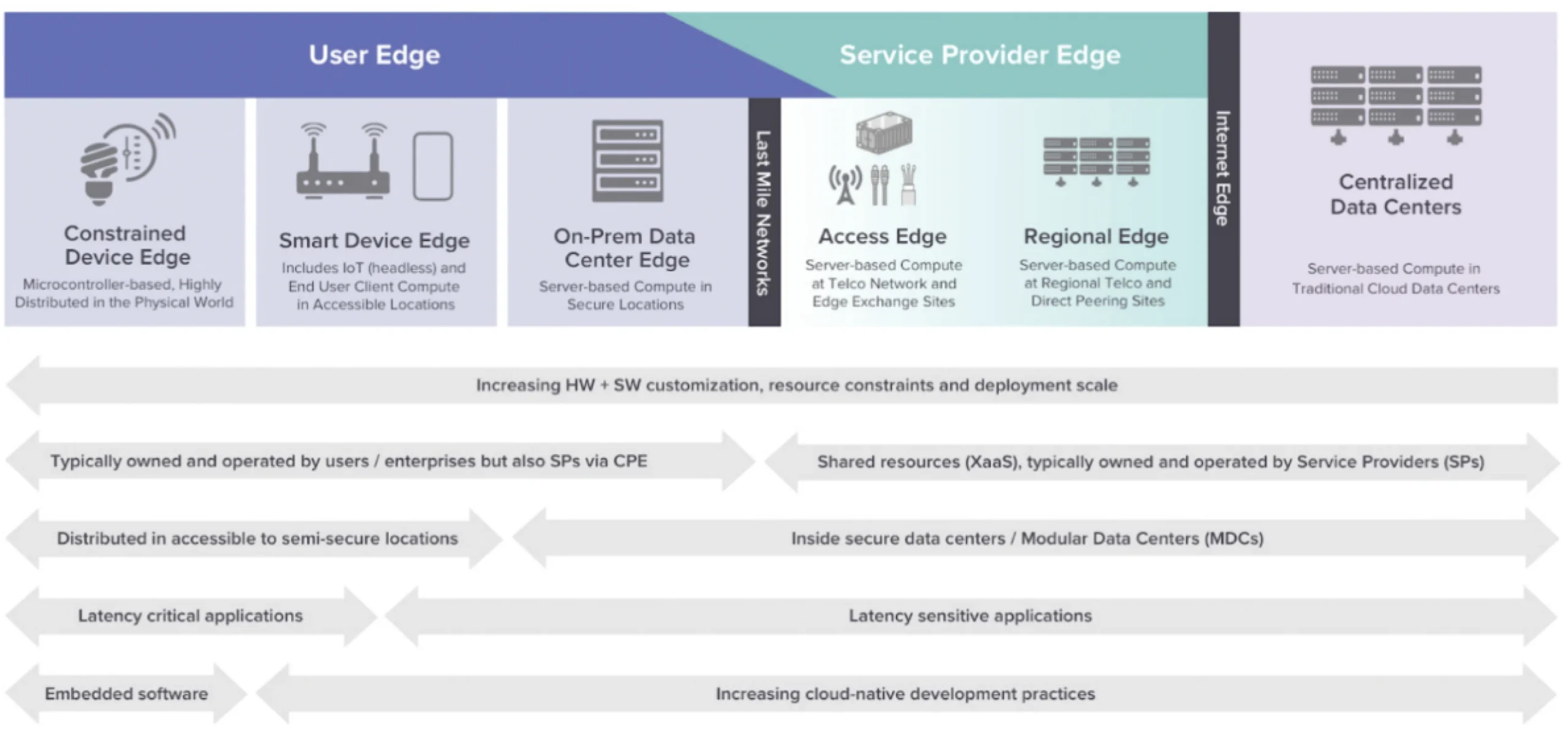

Although there are a variety of definitions for edge computing, The Linux Foundation’s LF Edge has created a formal taxonomy that has received many accolades and is getting widespread adoption. The LF Edge taxonomy visualizes edge computing through the continuum of physical infrastructure that comprises the internet, from centralized data centers to devices. By locating services at key points along this continuum, developers can better satisfy the latency requirements of their applications. The below figure summarizes the edge computing continuum, spanning from discrete distributed devices to centralized data centers, along with key trends that define the boundaries of each category. This includes the increasingly complex design tradeoffs that architects need to make the closer compute resources get to the physical world.

The LF Edge model focuses on the two main edge tiers that straddle the last mile networks, the “Service Provider Edge” and the “User Edge”, with each being further broken down into subcategories.

The User Edge consists of self-contained end-point devices (such as smartphones, wearables and automobiles), gateway devices (such as IoT aggregators and switching and routing devices) and on-premises server platforms.

And the Service Provider Edge, which consists of compute platforms, which are co-located with:

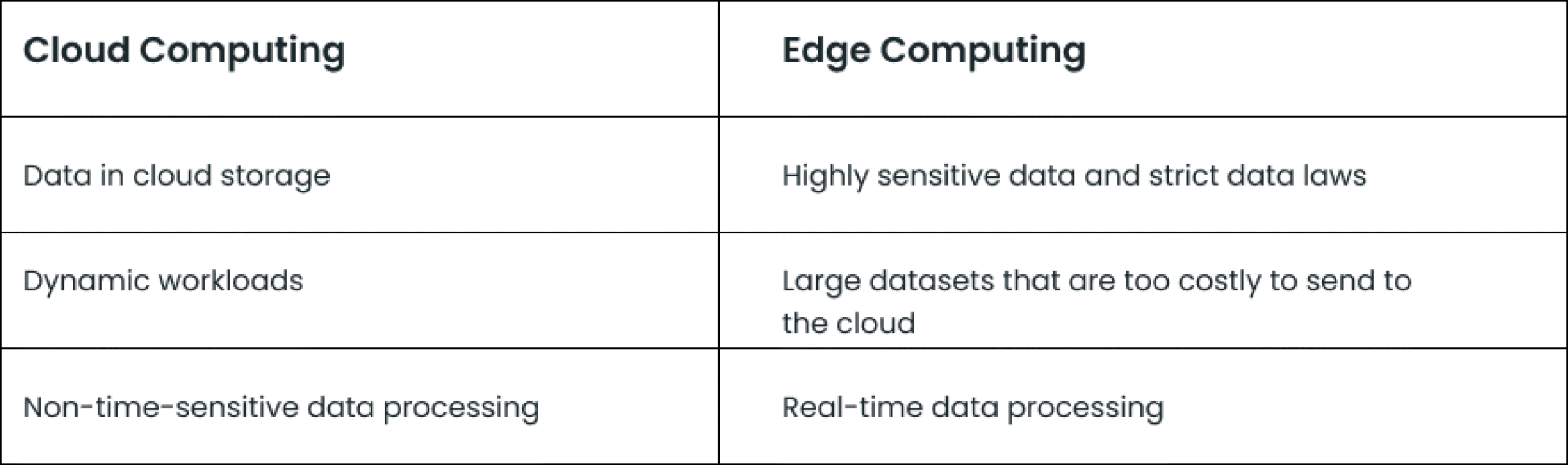

It is important to understand how Edge Computing is different from Cloud Computing. Are they competing technologies or complementary technologies?

Public cloud computing platforms allow enterprises to supplement their private data centers with global servers that extend their infrastructure to any location and allow them to scale computational resources up and down as needed. These hybrid public-private clouds offer unprecedented flexibility, value and security for enterprise computing applications.

However, low-latency applications running in real time throughout the world can require significant local processing power, often in remote locations too far from centralized cloud servers. And some workloads need to remain on premises or in a specific location due to low latency or data-residency requirements.

This is why many enterprises deploy their low-latency applications using edge computing, which refers to processing that happens where data is produced. Instead of the work being done in a distant, centralized data center, edge computing handles and stores data locally on an edge device.

Cloud and edge computing have a variety of benefits and use cases, and can work together.

Cloud computing is the delivery of computing services (including servers, storage, databases, networking, software, analytics and intelligence) over the Internet (“the cloud”) to offer faster innovation, flexible resources and economies of scale. Clients typically pay only for the cloud services they use, which helps lower operating costs, run infrastructure more efficiently and scale as business needs change. Cloud computing has been around for almost 15 years now. There are three deployment types (Public Cloud, Private Cloud and Hybrid Cloud) and three service models: Infrastructure as a Service (IaaS), Platform as a Service (PaaS) and Software as a Service (SaaS).

Cloud computing adoption is only increasing. Here’s why enterprises have implemented cloud infrastructure and will continue to do so:

Edge and cloud computing have distinct features and most organizations will end up using both. Here are some considerations when looking at where to deploy different workloads.

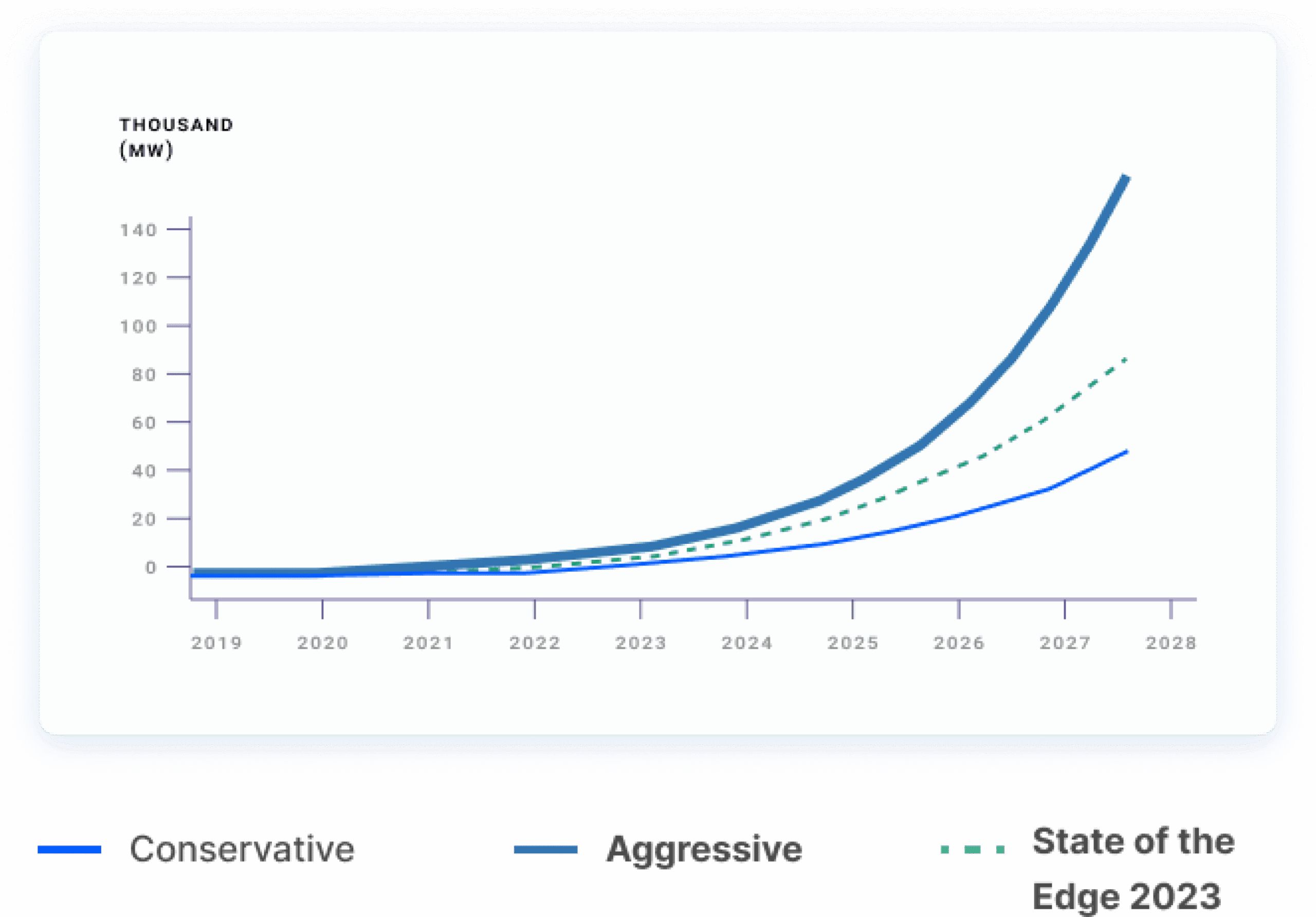

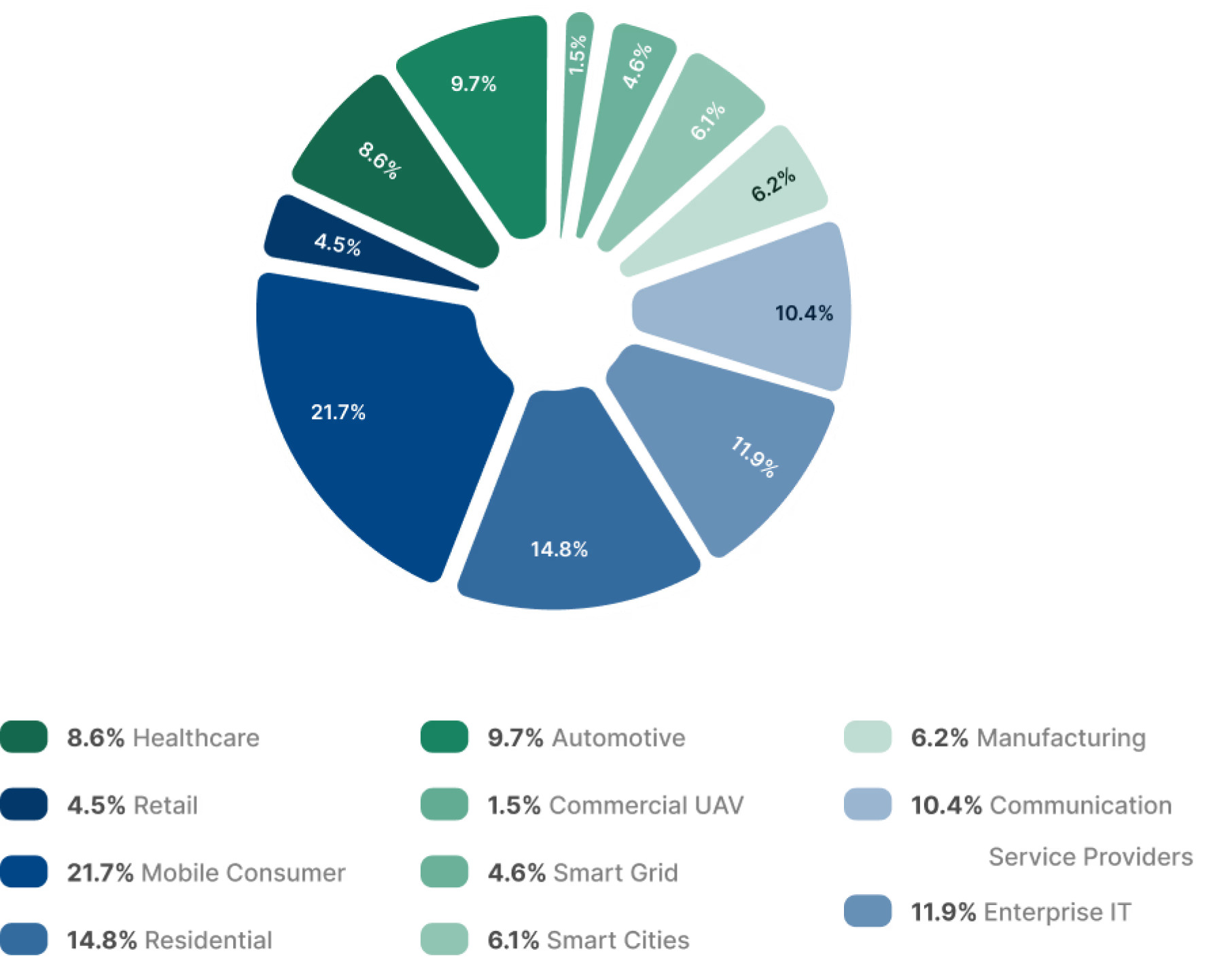

The forecast model focuses on 43 use cases spanning 11 verticals, including CSP, enterprise IT, residential and mobile consumer services, retail, healthcare, automotive, commercial UAV, smart grid, smart cities, and manufacturing. Other verticals, such as education and financial services, as well as investments at the Device Edge, will drive additional edge computing market opportunities that are not included in the forecast.

The forecast uses the power footprint of IT server equipment deployed at the Service Provider Edge as a primary measure to illustrate edge expansion. Server power footprint represents the rated power of all the equipment operating at the edge, though it does not represent actual electrical power consumed, which will be much less and depend on the power duty-cycle of the deployed equipment.

The COVID-19 pandemic has disrupted the global status quo and is heralding a world of digital “haves” and “have-nots”. Some areas of digital services are thriving, while others, such as the travel industry, are languishing. In aggregate, though, the pandemic is accelerating digital transformation and service adoption. Consumers and enterprises are using digital solutions to overcome pandemic challenges, such as lockdowns, social distancing and fragile supply chains. After the pandemic, permanent change is inevitable for use cases where consumers and enterprises find continued value. However, exactly which digital use cases will prevail is challenging to predict. To account for this uncertainty, both a conservative and an aggressive (optimistic) forecast for the Infrastructure Edge have been developed. The conservative forecast has the global aggregate IT power footprint increasing from 1,078 MW in 2019 with a CAGR (Compound Annual Growth Rate) of 40% to reach 40,380 MW by 2028. The aggressive forecast results in a 70% CAGR to reach 120,840 MW by 2028.
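The growth-rate arithmetic can be checked with a short sketch. It uses the standard compound annual growth rate formula and the endpoint figures quoted above. The number of compounding periods (nine, from 2019 to 2028) is an assumption, since the text does not state the base year convention used by the forecast.

```python
def implied_cagr(start: float, end: float, periods: int) -> float:
    """Compound annual growth rate implied by two endpoint values."""
    return (end / start) ** (1 / periods) - 1


def project(start: float, rate: float, periods: int) -> float:
    """Value after compounding `start` at `rate` for `periods` years."""
    return start * (1 + rate) ** periods


BASE_MW = 1078          # 2019 footprint quoted in the text
PERIODS = 2028 - 2019   # assumption: nine annual compounding periods

for name, target_mw in [("conservative", 40_380), ("aggressive", 120_840)]:
    rate = implied_cagr(BASE_MW, target_mw, PERIODS)
    print(f"{name}: implied CAGR {rate:.1%}")
```

Under the nine-period assumption, the aggressive endpoints imply a rate close to the quoted 70%, while the conservative endpoints imply a rate nearer 50% than 40%. The forecast may compound over a different period or round differently, so the sketch is an arithmetic check rather than a restatement of the forecast's method.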

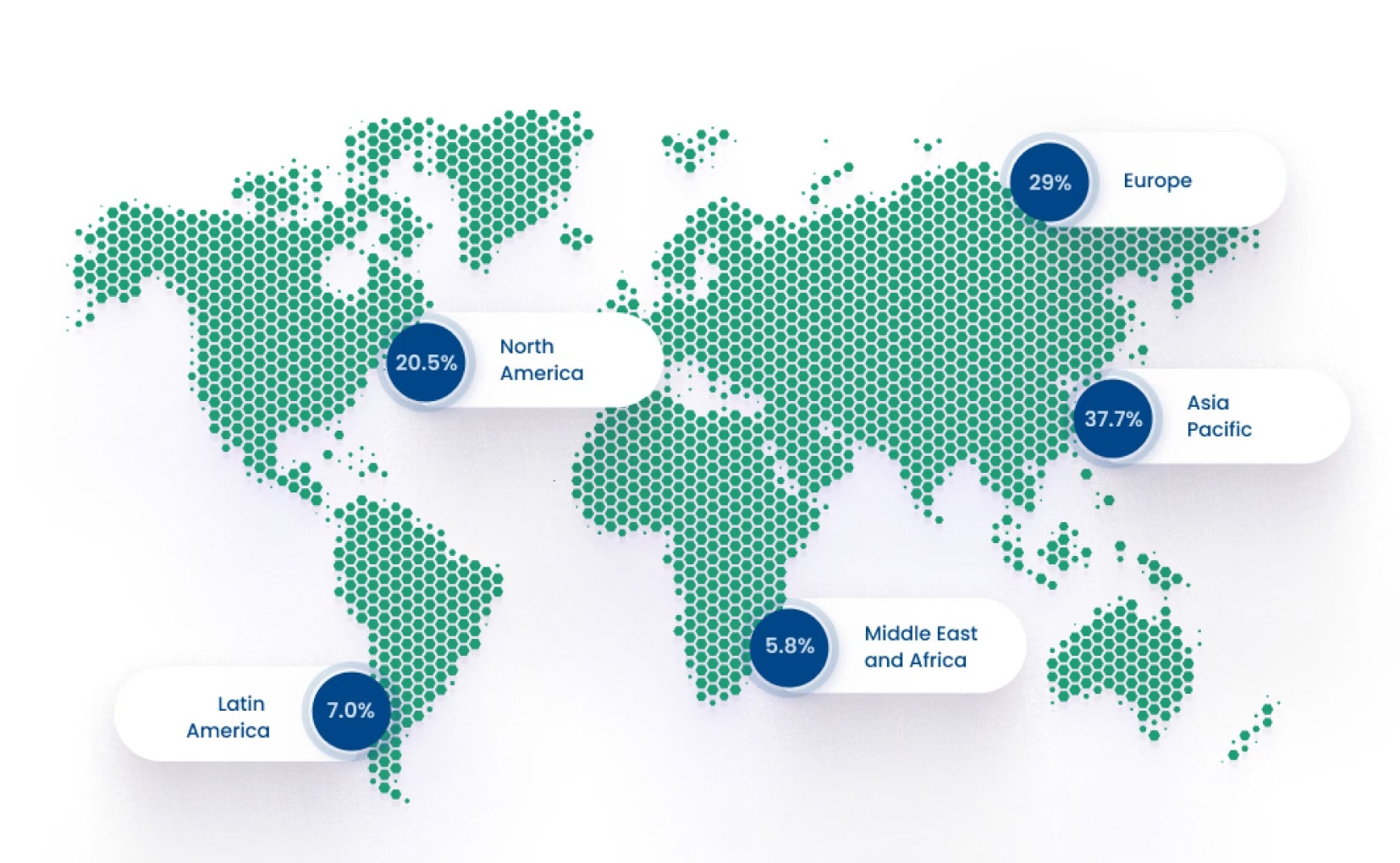

The Infrastructure Edge is expanding in all global regions. The pace of this expansion depends on a variety of macroeconomic, geographical and geopolitical factors that were assessed in the forecast. By 2028, it is forecast that 37.7% of the global Infrastructure Edge footprint will be in Asia Pacific. Asia Pacific has tremendous diversity, spanning some of the poorest and wealthiest countries in the world. Countries including China, Japan and South Korea are predicted to be significant contributors to edge computing adoption in the region. Europe is forecast to have 29% of the global Infrastructure Edge footprint by 2028, with over half being in Western Europe.

Countries like Germany, France and the United Kingdom are predicted to be major contributors to Infrastructure Edge deployments in Europe. It is predicted that by 2028, 20.5% of the global Infrastructure Edge will be deployed in North America. North America is leading the early adoption of the Infrastructure Edge, buoyed by its high technology industry as well as its dominance in the internet and cloud computing. However, in the medium term, Asia Pacific and Europe will demand an increasing percentage of the global Infrastructure Edge, primarily because of their larger populations. Amongst the other regions, 7% of the global Infrastructure Edge will be deployed in Latin America by 2028, with significant investments in countries like Brazil and Mexico. The remaining 5.8% in 2028 is predicted to be deployed in the Middle East and African regions.

Digital service adoption accelerated in 2020 with specific use cases to address challenges created by the COVID-19 pandemic. This accelerated adoption is expected to have long-term implications for the edge computing market and the use cases that come to the fore. It is forecasted that by 2028, 36.5% of the global Infrastructure Edge footprint will be for use cases associated with mobile and residential consumers. This is down from the 45.1% share that was forecasted earlier and reflects increased edge services adoption in other verticals.

In 2028, it is forecast that 11.9% of the global Infrastructure Edge footprint will be associated with Enterprise IT use cases. In the short to medium term, infrastructure edge demand for Enterprise IT will be driven by cloud service use cases that are complemented and enhanced with edge computing capabilities. However, it is predicted that in the long-term, Infrastructure Edge demand will be driven by “edge native” use cases that can only function when edge computing capabilities are available. These edge native use cases depend on the maturation of key technologies, such as augmented and virtual reality, and autonomous systems, such as those for closed loop enterprise IT functions.

CSPs have driven early Infrastructure Edge demand as they virtualize and cloudify their networks. Initially core and transport networks are being virtualized with standards like NFV and SDN. This is generally a precursor for end-to-end network transformation that incorporates access network virtualization, such as Cloud Radio Access Networks (C-RAN). In addition, CSPs are uniquely positioned with geographically distributed network infrastructure, which is well suited for Infrastructure Edge implementations. Many CSPs are implementing Multi-access Edge Computing (MEC) technology to bring a variety of network-centric capabilities, often in partnership with third parties, including cloud service providers like Amazon Web Services (AWS), Microsoft Azure and Google Cloud Platform (GCP). In 2028, it is forecast that 10.9% of infrastructure edge deployments will support CSP use cases.

Manufacturers have been pursuing multi-year strategies to implement digital services under the guise of frameworks such as Industry 4.0. The digital service priorities of manufacturers vary depending on their market conditions, operational objectives, product complexity, precision and customization demands, as well as the extent of brownfield and greenfield manufacturing facilities. For example, the automotive industry is confronted with tremendous disruption from companies like Tesla and has seen a surge in demand for electric vehicles. Electric vehicles require greenfield manufacturing facilities. Because these facilities are greenfield, they typically incorporate advanced digital operations that capitalize on advancements in automation, wireless connectivity and autonomous systems, such as autonomous mobile robots. This requires extensive edge computing functionality, the lion’s share of which is expected to reside at the Device Edge, particularly for Operational Technologies (OT).

However, use cases that span geographical areas beyond the boundaries of individual factories, such as warehousing, supply chain and logistics, will also require Infrastructure Edge capabilities. By 2028, it is forecast that 6.2% of the global Infrastructure Edge will support manufacturing-related use cases.

Smart cities have been on the horizon for many years and are now using a range of digital services, such as those relating to security and surveillance, city operations (e.g., waste management) and traffic management. As more cities implement digital services, their value propositions become better understood and easier to justify elsewhere. While the Device Edge addresses many smart city use case demands, it is forecast that 6.1% of the global Infrastructure Edge in 2028 will support smart-city use cases. These use cases include smart buildings, lighting and traffic management and other digital services for public safety, venues and city operations.

Traditional retail companies are under tremendous pressure to innovate as ecommerce solutions grow in popularity. Permanent consumer behavior changes that favor ecommerce are likely to prevail after the COVID-19 pandemic and will continue to compromise traditional retail. Service continuity is important for retail, particularly for point-of-sale payment systems, which generally depend on Device Edge capabilities. Infrastructure Edge solutions are used for a range of use cases, including digital signage and Digital Out-of-Home (DOOH) experiences, immersive in-store solutions, proximity marketing and supply chain optimization. In 2028, it is forecast that 4.6% of the global Infrastructure Edge footprint will be associated with traditional retail use cases.

Advancements in energy utility technologies and renewables, including smart grids, microgrids and distributed energy storage, are driving edge computing demand at both the device and infrastructure edges. In 2028, it is forecast that 4.6% of the global Infrastructure Edge will support use cases associated with smart grids.

Edge computing is only possible if the underlying critical infrastructure is performant, redundant and seamlessly integrated. As the old saying goes, a chain is only as strong as its weakest link, and this could not be a more fitting commentary for the world of internet-based computing infrastructure. Every piece of the puzzle has a role to play, and if one part breaks down, things can go wrong in a hurry. At the edge, critical infrastructure is diverse, vendor-neutral and comes in various form factors. Each layer builds on the one beneath it: the underlying wholesale data centre, the cloud infrastructure that it houses, the multiple sources of connectivity that connect end users and move data from the core to the edge, and the real estate that is able to support all these complex requirements. Each critical infrastructure building block is important in and of itself, but they work together as part of a single integrated ecosystem.

Several form factors of Edge Clouds are taking shape in the industry:

1. Regional Data Centers

Regional data centers have existed for some time, but are gaining prominence as cloud infrastructure moves out to the edge. These facilities are frequently found in second-tier markets that are generally underserved from an infrastructure perspective. Regional data centers rarely attain the size and density of hyperscale data centers, but their location in second-tier metros makes them convenient for delivering infrastructure in smaller increments.

2. Interconnection-oriented data centers

Interconnection-oriented data centers offer data center capacity that is also equipped with extensive interconnection capabilities. The greater the density of networks and clouds available through an interconnection fabric, the more options and flexibility become available to end users to create hybrid environments or to build between the core and edge.

3. Micro-modular edge data centers

Micro-modular edge data centers are smaller facilities that range in size from street-side cabinets to cargo container-like structures that house limited quantities of server infrastructure. Because they are built in a factory and have a smaller form factor than typical data centers, micro-modular data centers are relatively easy to move and deploy, which makes them a good fit for housing IT infrastructure at the edge. The common thread is proximity to wireless and terrestrial connectivity, resulting in maximum performance and the ability to backhaul traffic to the core.

4. Streetside Cabinets

Streetside Cabinets represent the smallest data center form factor at the edge. These cabinets are designed to hold anywhere from a quarter-rack to two full racks of modern data center equipment in highly-remote locations.

5. Telco Edge Cloud (TEC)

Telecom operator assets such as wireless towers and cable headends have quickly become key infrastructure components of the edge computing ecosystem. These facilities provide a crucial point of connectivity, as they are the “jumping off” point for the last mile networks. Because of that, the real estate that surrounds these facilities is an ideal place for edge compute infrastructure to reside. Placing compute infrastructure in close proximity to a wireless tower or cable headend can minimize the distance traveled between the last mile network and the primary processing functions of an application that is housed in an edge data center on the same property. The end result of computing at the edge in this fashion is significantly reduced latency and improved performance.

Edge facilities will also create new types of interconnection and peering. Similar to how data centers became destinations and meeting points for networks, the micro data centers at wireless towers and cable headends that will power edge computing often sit at the crossroads of terrestrial connectivity paths. These locations will become centers of gravity for local interconnection and edge exchange, creating new and newly efficient paths for data.

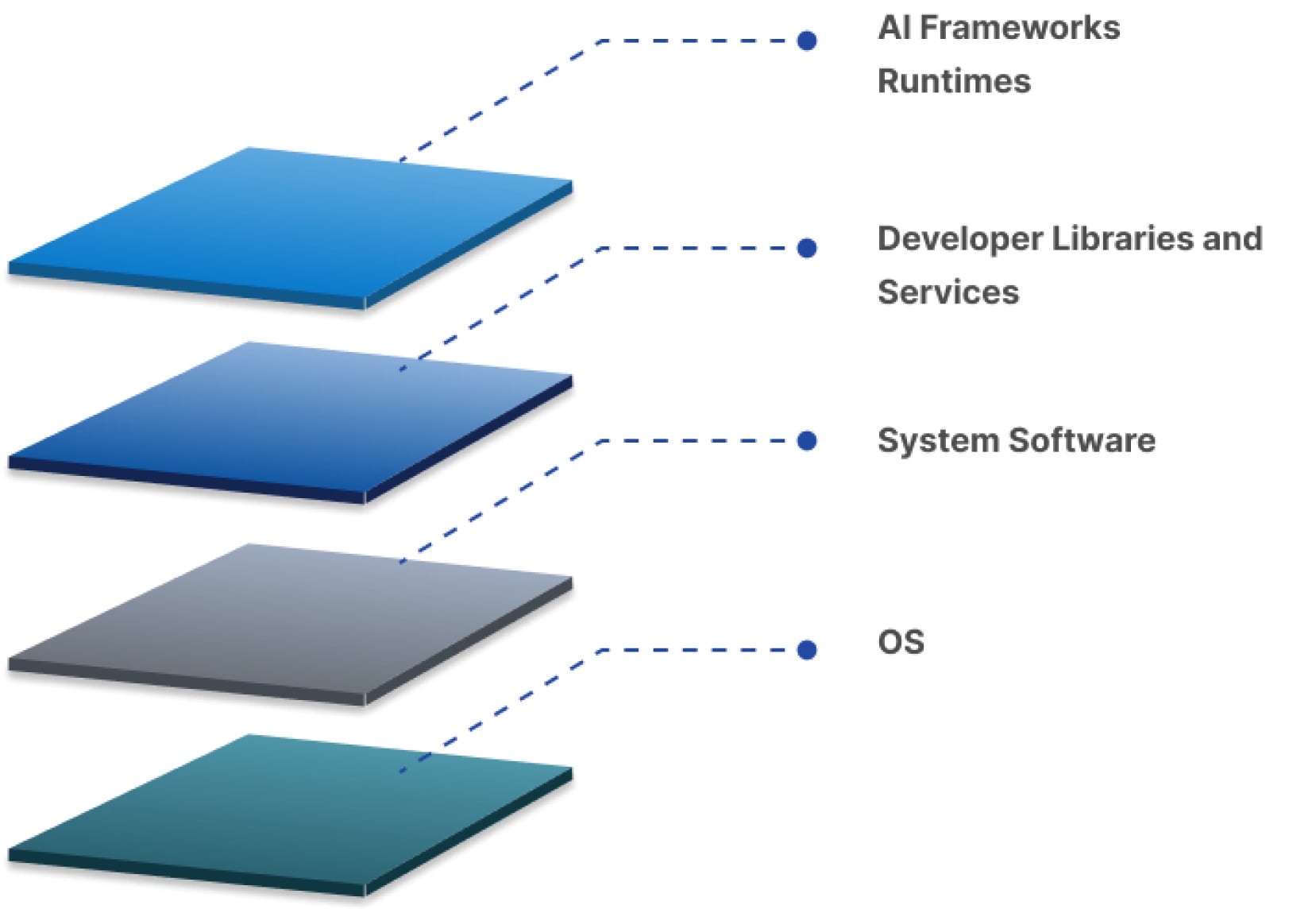

Software is at the heart of edge computing. It’s how applications are delivered, how edge hardware is managed and how workloads move around networks. The ecosystem is building a new stack to run at the edge of our networks, taking lessons from the hyperscale cloud, from IoT, from metro data centers, and from content delivery networks and the web, mashing them all together and building something new to suit new hardware, new networks, and a new generation of applications.

Cloud native technologies and the DevSecOps movement are having a significant impact on the way users manage systems and software, allowing common abstractions to deliver software at the edge and automate their operations. Broadly speaking, one can think of the edge stack as three distinct layers that require different engineering skills. At the lowest level is the systems layer, populated by firmware, operating systems and hypervisors, providing the technologies needed to work directly with edge hardware, whether IoT-class devices on the user edge or an increasing collection of specialized infrastructure at the service provider edge.

The next layer encompasses implementation and management. This is where tools like open source Kubernetes and OpenStack, or Red Hat’s OpenShift and RDO (RPM Distribution of OpenStack) operate, providing the services needed to support modern applications. This space is expanding to include specific edge solutions with platform-level support for event-based operations. At the top sits tooling designed to deploy and operate applications. Built into Continuous Integration/Continuous Deployment (CI/CD) pipelines and using methodologies like GitOps, these tools provide a layer that allows effective management of distributed applications at the scale needed for edge networks.

Across all three layers is the need for a common observability layer, providing information tailored to the needs of different stakeholders. Traditional logging and monitoring services are still a key component of modern application operations, with tools like the ELK stack (Elasticsearch, Logstash and Kibana) offering log consolidation, querying and dashboards and Prometheus providing monitoring. Using ML alongside log and metric analysis allows prediction of failures and spotting of security breaches. Edge technologies are particularly amenable to using cloud native tooling to provide an alternative approach to management and control, as much management tooling isn’t designed for distributed architectures or for the global scale that can be necessary for edge architectures.
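As a simple statistical stand-in for the ML-assisted analysis mentioned above, the sketch below flags metric samples that deviate strongly from a sliding window of recent values (a rolling z-score). The class name, window size, threshold and sensor readings are all hypothetical. A production system would typically pull metrics from its monitoring stack and use a richer model.

```python
from collections import deque
from statistics import mean, stdev


class MetricAnomalyDetector:
    """Flags samples that deviate strongly from a sliding window of recent values."""

    def __init__(self, window: int = 30, threshold: float = 3.0):
        self.samples = deque(maxlen=window)  # recent "normal" samples
        self.threshold = threshold           # z-score above which a sample is flagged

    def observe(self, value: float) -> bool:
        """Return True if `value` looks anomalous relative to the current window."""
        anomalous = False
        if len(self.samples) >= 5:  # wait for a few points before judging
            mu, sigma = mean(self.samples), stdev(self.samples)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                anomalous = True
        if not anomalous:
            self.samples.append(value)  # keep outliers out of the baseline
        return anomalous


detector = MetricAnomalyDetector(window=20, threshold=3.0)
cpu_temps = [61, 62, 60, 63, 61, 62, 60, 61, 95, 62]  # made-up sensor readings
flags = [detector.observe(t) for t in cpu_temps]
print(flags)
```

Keeping flagged samples out of the baseline is a design choice: it stops a single spike from inflating the window's variance and masking the next one.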

Running real-time workloads across the highly-distributed infrastructure presented by edge computing introduces many complicated challenges to developers and operators. How do you decide which workloads should run where? How do you handle failovers and geo-redundancy? How do you move services in response to devices in motion, such as to maintain continuity and service-guarantees with a mobile device moving across a geography, as in a drone or autonomous vehicle?

Many orchestration technologies, open source and otherwise, have emerged to tackle these types of complex scheduling problems. These orchestration systems take into account increasingly sophisticated edge criteria for workload placement, automating decisions in real time and abstracting away the complexity from developers and operators, who would prefer to simply specify the SLAs they require. A custom scheduler for edge computing might consider many sophisticated attributes requested by workloads. In addition to the typical scheduling attributes, such as requirements around processor, memory and operating system, and occasionally some simple affinity/anti-affinity rules, edge workloads might also specify some or all of the following: geolocation, latency, bandwidth, resilience and/or risk tolerance (i.e., how many 9s of uptime), data sovereignty, cost, real-time network congestion, and requirements or preferences for specialized hardware (e.g., GPUs, FPGAs).
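A minimal sketch of such a placement decision might filter candidate sites on hard constraints and then rank the survivors. The `EdgeSite` and `WorkloadRequest` types, the site names and all numbers below are hypothetical, and only a subset of the attributes listed above is modeled. This is not the scheduler of any particular orchestrator.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class EdgeSite:
    name: str
    country: str
    latency_ms: float          # measured latency to the workload's users
    bandwidth_mbps: float
    cost_per_hour: float
    hardware: Set[str] = field(default_factory=set)  # e.g. {"gpu"}


@dataclass
class WorkloadRequest:
    max_latency_ms: float
    min_bandwidth_mbps: float
    allowed_countries: Set[str]  # data-sovereignty constraint
    required_hardware: Set[str] = field(default_factory=set)


def place(request: WorkloadRequest, sites: List[EdgeSite]) -> Optional[EdgeSite]:
    """Drop sites that violate a hard constraint, then pick the cheapest survivor."""
    feasible = [
        s for s in sites
        if s.latency_ms <= request.max_latency_ms
        and s.bandwidth_mbps >= request.min_bandwidth_mbps
        and s.country in request.allowed_countries
        and request.required_hardware <= s.hardware  # subset check
    ]
    # Tie-break on latency so the choice is deterministic.
    return min(feasible, key=lambda s: (s.cost_per_hour, s.latency_ms), default=None)


sites = [
    EdgeSite("tower-a", "KE", 4, 500, 1.20, {"gpu"}),
    EdgeSite("metro-b", "KE", 12, 2000, 0.60),
    EdgeSite("region-c", "ZA", 35, 10000, 0.30, {"gpu", "fpga"}),
]
request = WorkloadRequest(
    max_latency_ms=15,
    min_bandwidth_mbps=200,
    allowed_countries={"KE"},
    required_hardware={"gpu"},
)
chosen = place(request, sites)
print(chosen.name if chosen else "no feasible site")
```

Treating latency, sovereignty and hardware as hard filters and cost as the ranking criterion is one possible policy. A real scheduler could instead weight several soft preferences into a single score.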

New networking and data center systems have begun delivering telemetry via real-time data feeds. Modern scheduling algorithms can ingest this telemetry and use it to make real-time and predictive workload placement decisions that spare the developer from needing to understand the underlying complexity.
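One simple way to turn a noisy telemetry feed into a placement signal is an exponentially weighted moving average, sketched below. The `CongestionTracker` class, the site names and the congestion values (on a 0.0 to 1.0 scale) are hypothetical. Smoothing is only a basic form of prediction, and real schedulers may use far more elaborate models.

```python
class CongestionTracker:
    """Smooths a per-site congestion feed with an exponentially weighted moving average."""

    def __init__(self, alpha: float = 0.3):
        self.alpha = alpha   # weight given to the newest sample
        self.estimate = {}   # site name -> smoothed congestion (0.0 to 1.0)

    def ingest(self, site: str, congestion: float) -> float:
        """Fold one telemetry sample into the running estimate for `site`."""
        previous = self.estimate.get(site, congestion)  # first sample seeds the estimate
        self.estimate[site] = self.alpha * congestion + (1 - self.alpha) * previous
        return self.estimate[site]

    def least_congested(self) -> str:
        """Name of the site with the lowest smoothed congestion seen so far."""
        return min(self.estimate, key=self.estimate.get)


tracker = CongestionTracker(alpha=0.5)
feed = [("site-a", 0.2), ("site-b", 0.1), ("site-a", 0.2), ("site-b", 0.9)]
for site, value in feed:
    tracker.ingest(site, value)
print(tracker.least_congested())
```

A higher `alpha` reacts faster to congestion spikes at the cost of more jitter in placement decisions. A lower value favors stability.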

Hence, there is a strong requirement to develop Edge Computing orchestration software by leveraging open source technologies (the Linux Foundation Akraino project, Kubernetes and others) and customizing them to our needs. It is very important to participate in these projects, perform PoCs to evaluate the applications, harden the software stack completely and deploy it in order to be the leading provider of Edge Computing infrastructure on the African continent.

Several use cases place such demands on the edge. Autonomous platooning of truck convoys will likely be one of the first use cases for autonomous vehicles. Here, a group of trucks travels close behind one another in a convoy, saving fuel costs and decreasing congestion. With edge computing, it will be possible to remove the need for drivers in all trucks except the front one, because the trucks will be able to communicate with each other with ultra-low latency.

In the oil and gas industry, failures can be disastrous, so assets need to be carefully monitored. However, oil and gas plants are often in remote locations. Edge computing enables real-time analytics with processing much closer to the asset, meaning there is less reliance on good-quality connectivity to a centralized cloud.

Edge computing will also be a core technology in the more widespread adoption of smart grids and can help enterprises better manage their energy consumption. Sensors and IoT devices connected to an edge platform in factories, plants and offices are being used to monitor energy use and analyze consumption in real time. With real-time visibility, enterprises and energy companies can strike new deals, for example where high-powered machinery is run during off-peak times for electricity demand. This can increase the amount of green energy (like wind power) an enterprise consumes.

In predictive maintenance, manufacturers want to analyze and detect changes in their production lines before a failure occurs. Edge computing helps by bringing the processing and storage of data closer to the equipment. This enables IoT sensors to monitor machine health with low latency and perform analytics in real time.

Healthcare contains several edge opportunities. Currently, monitoring devices (e.g. glucose monitors, health tools and other sensors) are either not connected or, where they are, large amounts of unprocessed data from the devices need to be stored on a third-party cloud. This presents security concerns for healthcare providers. An edge on the hospital site could process data locally to maintain data privacy. Edge also enables right-time notifications to practitioners of unusual patient trends or behaviours (through analytics/AI), and the creation of 360-degree patient dashboards for full visibility.

Operators are also increasingly looking to virtualise parts of their mobile networks (vRAN), which has both cost and flexibility benefits. The new virtualized RAN hardware needs to do complex processing with low latency, so operators will need edge servers to support virtualizing their RAN close to the cell tower.

Cloud gaming, a new kind of gaming that streams a live feed of the game directly to devices while the game itself is processed and hosted in data centres, is highly dependent on latency. Cloud gaming companies are looking to build edge servers as close to gamers as possible in order to reduce latency and provide a fully responsive and immersive gaming experience.

In content delivery, caching content (e.g. music, video streams, web pages) at the edge can greatly improve delivery and significantly reduce latency. Content providers are looking to distribute CDNs even more widely to the edge, thus guaranteeing flexibility and customisation on the network depending on user traffic demands.

Edge computing can also enable more effective city traffic management. Examples include optimizing bus frequency given fluctuations in demand, managing the opening and closing of extra lanes and, in future, managing autonomous car flows. With edge computing, there is no need to transport large volumes of traffic data to the centralized cloud, which reduces both bandwidth cost and latency.
