
Edge computing is the practice of moving compute power physically closer to where data is generated, usually an Internet of Things (IoT) device or sensor. The name reflects how compute power is brought to the edge of the network or device. Edge computing allows for faster data processing, lower bandwidth consumption and tighter control over data sovereignty. Because data is processed at the network’s edge, less of it has to travel among servers, the cloud and devices or edge locations to be processed.
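To make the bandwidth point concrete, here is a minimal sketch of an edge device summarizing a window of sensor readings locally and sending only the summary upstream. The function name `summarize_window`, the alert threshold and the synthetic temperature readings are all assumptions made for illustration, not part of any specific product.

```python
import json
import statistics


def summarize_window(readings, threshold):
    """Reduce a window of raw readings to a compact summary on the edge device."""
    return {
        "count": len(readings),
        "mean": statistics.fmean(readings),
        "max": max(readings),
        # Only readings above the (hypothetical) alert threshold are kept in full.
        "alerts": [r for r in readings if r > threshold],
    }


# Synthetic temperature readings from one sensor (illustrative data only).
readings = [20.0 + (i % 10) * 0.1 for i in range(1000)]

raw_payload = json.dumps(readings)        # what a cloud-only design would upload
summary = summarize_window(readings, threshold=25.0)
summary_payload = json.dumps(summary)     # what the edge device uploads instead

print(len(raw_payload), "bytes raw vs", len(summary_payload), "bytes summarized")
```

The trade-off is that the cloud no longer sees every raw reading, so the summary has to carry whatever downstream analytics actually need.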

Artificial Intelligence (AI) technology and applications are increasingly being introduced into public and private sector enterprises globally. One major reason is the availability of infrastructure that can perform heavy CPU/GPU operations at far lower cost than before. Another is the progress in AI methods such as Deep Learning, in which a model is trained as a multi-layer Artificial Neural Network (ANN). This intelligence can now be applied to various forms of input, such as text, voice or video, to parse them and provide advanced analytics for various use cases.

While Edge Computing and AI are different domains and can serve different use cases, the recent trend is to deploy AI applications on edge computing infrastructure to solve many real-world problems. The industry refers to this kind of deployment as Edge AI. It makes it possible to provide intelligence at the edge, very close to enterprises and consumers.

In this blog, we explore the concept of Edge AI, the technologies involved, how it differs from Cloud AI (with the help of an example), and the many use cases enterprises can benefit from.

Edge Artificial Intelligence (Edge AI) is a paradigm for crafting AI workflows that span centralized data centers (the cloud) and devices outside the cloud that are closer to humans and physical things (the edge). This stands in contrast to the more common practice in which AI applications are developed and run entirely in the cloud, which people have begun to call cloud AI. A helpful way to understand the importance of the edge is as a means of extending digital transformation practices innovated in the cloud out to the rest of the world.

Until recently, most AI applications were developed using symbolic AI techniques that hard-coded rules into applications, such as expert systems or fraud detection algorithms. In some cases, nonsymbolic AI techniques, such as neural networks, were developed for an application, such as optical character recognition for check numbers or typed text.

Over time, researchers discovered ways to scale up deep neural networks in the cloud for training AI models and generating responses based on input data, which is called inferencing. Edge AI extends AI development and deployment outside of the cloud.

In general, edge AI is used for inferencing, while cloud AI is used to train new algorithms. Inferencing requires significantly less processing capability and energy than training. As a result, well-designed inferencing algorithms can sometimes run on existing CPUs or even on less capable microcontrollers in edge devices. In other cases, highly efficient AI chips improve inferencing performance, reduce power consumption, or both.
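The gap between training and inferencing cost can be illustrated with a toy model. The sketch below is an assumption-laden illustration, not a real edge workload: it uses a tiny logistic regression on a logical-AND dataset, and the names `train` and `predict` are hypothetical. Training loops over the data many times, while inference is a single weighted sum per input.

```python
import math


def predict(weights, bias, x):
    """Inference: one weighted sum and one sigmoid per input."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def train(samples, labels, epochs=200, lr=0.5):
    """Training: repeated forward passes plus weight updates over the whole dataset."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    forward_passes = 0
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            error = predict(weights, bias, x) - y
            forward_passes += 1
            # Stochastic gradient descent step for logistic loss.
            weights = [w - lr * error * xi for w, xi in zip(weights, x)]
            bias -= lr * error
    return weights, bias, forward_passes


# Toy dataset (logical AND), standing in for data collected in the cloud.
samples = [[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]]
labels = [0, 0, 0, 1]

# "Cloud" side: many passes over the data to fit the model.
weights, bias, passes = train(samples, labels)

# "Edge" side: only the fitted weights are shipped; each prediction is one pass.
print("training forward passes:", passes)
print("edge prediction for [1, 1]:", predict(weights, bias, [1.0, 1.0]) > 0.5)
```

Only `weights` and `bias` need to reach the device, which is why a model trained on large cloud hardware can still serve predictions on a modest CPU or microcontroller.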

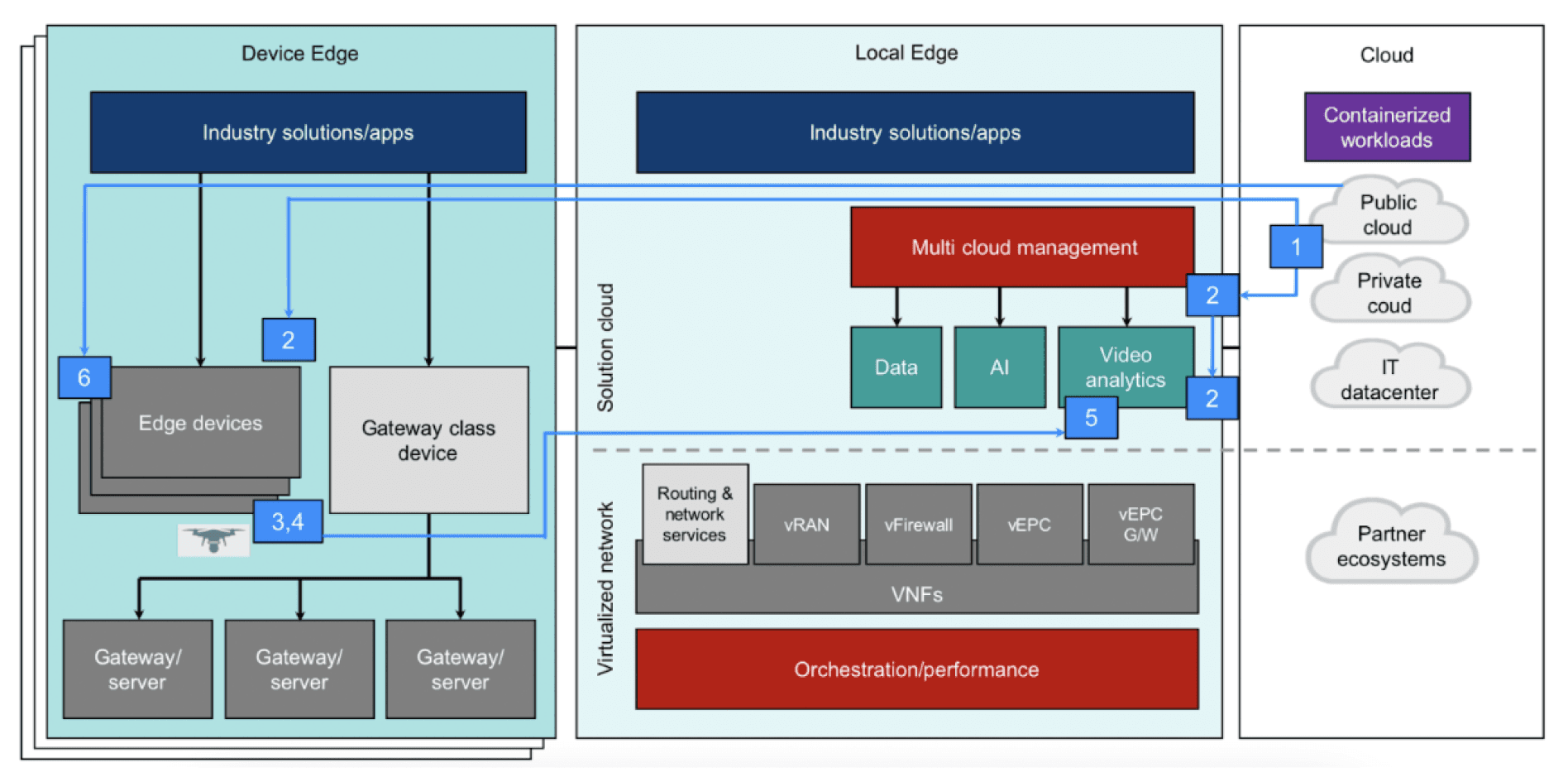

As with Edge Computing and Cloud Computing, deploying AI applications at the edge and in the cloud involves different considerations. The distinction between Edge AI and Cloud AI needs to start with how these ideas evolved. There were mainframes, desktop computers, smartphones and embedded systems long before there was a cloud or an edge. The applications for all these devices were developed at a slow pace using Waterfall development practices. Teams attempted to cram as much functionality and testing as possible into annual updates.

The cloud brought attention to various ways to automate many data center processes. This allowed teams to adopt more Agile development practices, and some large cloud applications now get updated dozens of times a day. This makes it easier to develop application functionality in smaller chunks. The concept of the edge suggests a way of extending these more modular development practices beyond the cloud to edge devices. Edge AI focuses on extending AI development and deployment workflows to run on mobile phones, smart appliances, autonomous vehicles, factory equipment and remote edge data centers.

Edge AI comes in different degrees. At a minimum, an edge device like a smart speaker may simply send all speech to the cloud for processing. More sophisticated edge AI devices, such as 5G access servers, could provide AI capabilities to nearby devices. The Linux Foundation’s LF Edge group includes light bulbs, mobile devices, on-premises servers, 5G access devices and smaller regional data centers as different types of edge devices.

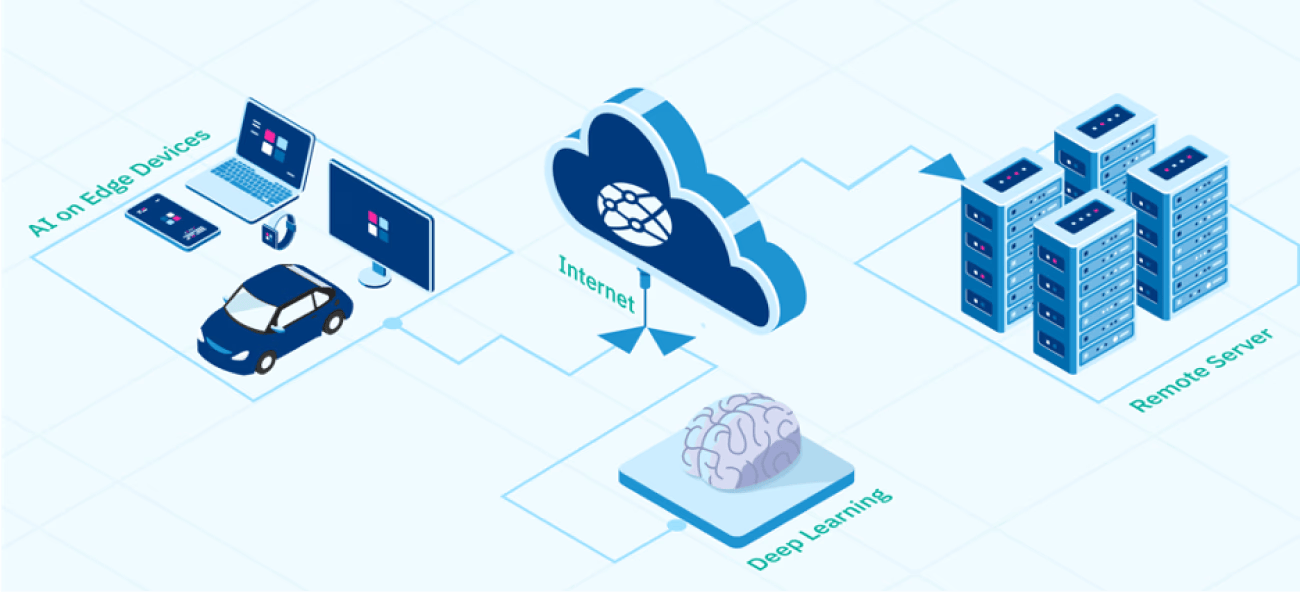

Edge AI and cloud AI work together, with varying contributions from the edge and the cloud in both training and inferencing. At one end, the edge device sends raw data to the cloud for inference and waits for a response. In the middle, the edge AI may run inference locally on the device using models trained in the cloud. At the other end, the edge AI may also play a larger role in training the AI models.
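One common way to combine the first two points on this spectrum is to run inference locally and fall back to the cloud only when the local model is unsure. The sketch below is a minimal illustration under stated assumptions: `HybridClassifier`, `local_model`, `cloud_model` and the 0.8 confidence threshold are hypothetical, and both models are simple stand-in functions rather than real trained networks or network calls.

```python
from dataclasses import dataclass
from typing import Callable, Sequence, Tuple

Prediction = Tuple[str, float]  # (label, confidence)


def local_model(features: Sequence[float]) -> Prediction:
    """Stand-in for a small on-device model trained in the cloud."""
    score = sum(features) / len(features)
    label = "anomaly" if score > 0.5 else "normal"
    confidence = abs(score - 0.5) * 2  # far from the decision boundary = confident
    return label, confidence


def cloud_model(features: Sequence[float]) -> Prediction:
    """Stand-in for a larger cloud-hosted model (a real one would be a network call)."""
    label = "anomaly" if max(features) > 0.58 else "normal"
    return label, 0.99


@dataclass
class HybridClassifier:
    local: Callable[[Sequence[float]], Prediction]
    cloud: Callable[[Sequence[float]], Prediction]
    min_confidence: float = 0.8  # hypothetical cut-off for trusting the edge result

    def predict(self, features: Sequence[float]) -> Tuple[str, float, str]:
        label, confidence = self.local(features)
        if confidence >= self.min_confidence:
            return label, confidence, "edge"
        # Low confidence: send the raw features to the cloud and wait for its answer.
        label, confidence = self.cloud(features)
        return label, confidence, "cloud"


clf = HybridClassifier(local=local_model, cloud=cloud_model)
clear_case = clf.predict([0.95, 0.97, 0.99])   # handled on the device
unclear_case = clf.predict([0.5, 0.6])         # escalated to the cloud
print(clear_case[2], unclear_case[2])
```

Raising `min_confidence` sends more traffic to the cloud in exchange for accuracy, while lowering it keeps more decisions on the device and reduces latency and bandwidth use.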

Let us look at a use case that gives an overview of how Edge Computing, AI and Cloud Computing can work together to solve real-world problems.

Deep Learning has unlocked the potential of three transformative technologies: Computer Vision, Natural Language Processing (NLP), and Automated Predictions. By leveraging Deep Learning techniques, industries are witnessing unprecedented advancements in image recognition, object detection, language understanding, translation, sentiment analysis, and predictive analytics. As Deep Learning continues to evolve, it promises to reshape industries, improve user experiences, and drive innovation in the digital age. Embracing the power of Deep Learning is the key to unlocking new possibilities and achieving breakthroughs in a wide range of domains.

Edgedock develops innovative AI and software solutions to address security and digitisation challenges for businesses globally. All our solutions are powered by new-age technologies such as ML/AI/DL, Robotic Process Automation, Cloud Computing and Edge Computing: